Barriers to science communication: The challenges of finding high quality, accessible research

Author(s):

Alice Fleerackers

Julia Krolik

Cat Lau

Dorina Simeonov

A recent survey paints a surprising picture of the state of science in Canada. While the vast majority of Canadians — 84 percent — believe science improves their quality of life, over half feel more should be done to make scientific information understandable to the public.

This responsibility increasingly falls on scientists, who are seen as some of the most trusted sources of scientific information. Yet few receive formal training in how to share their work beyond the academic sphere, and many struggle to communicate their ideas effectively.

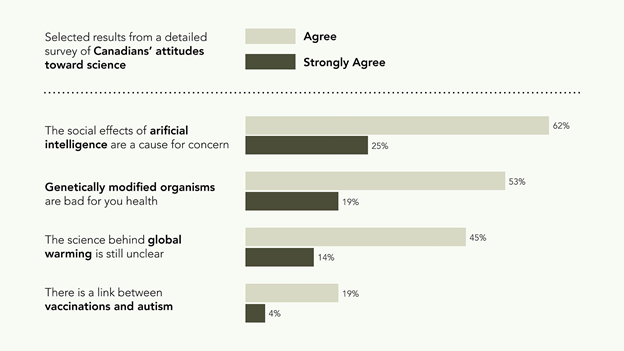

That’s why we chose to focus our CSPC panel on communicating science to nonacademic audiences. Turning to four science topics Canadians identify as concerning — vaccinations, climate change, artificial intelligence, and genetically modified organisms (GMOs) — we developed a hands-on workshop aimed at mobilizing knowledge and dispelling misperceptions about these controversial issues.

In this editorial, we share the challenges we faced in finding (and accessing) high quality research about the four topics without privileged access to academic databases, and reflect on the implications for public understanding of science.

Key science topics like vaccination, genetically modified organisms, climate change, and artificial intelligence remain controversial among Canadians. Image created by: Julia Krolik (Pixels and Plans). Adapted from John Sopinski (Globe and Mail) with data from Leger / Ontario Science Centre.

How do publics learn about science?

Given that our goal was to communicate science beyond the academic sphere, we decided to seek out research about vaccines, climate change, artificial intelligence, and GMOs as if we were interested members of the public, rather than scientists and science communicators.

We know from recent studies that publics are increasingly learning about science online, often through “layman’s” sources like news stories, science blogs, and social media posts. Although scholarly literature is not typically seen as a top source of public science information, research suggests that people unaffiliated with universities do rely on it—if they have access, that is.

With this in mind, we set aside our institutional logins, scouring the web for open access research through library repositories, preprint servers, and more. To do so, we relied on freely available search tools, like Google Scholar and PubMed, rather than paid services like Web of Science.

What did we find? Not only was conducting research this way far more challenging than using academic search tools, it also led to many dead ends. Despite the continued growth of Open Access publishing, we came up against paywalls again and again. So much of the research we found was locked away beyond our reach.

Scientists tend to have greater access to science than most members of the public. Image created by Julia Krolik (Pixels and Plans).

What does “quality” mean, anyway?

Our selection of articles was further limited by considerations of scientific rigour. Many of the articles we found relied heavily on discipline-specific jargon and insider knowledge. Without extensive training in the topic at hand, separating the scholarly wheat from the chaff was no simple feat.

“What counts as ‘high quality’ or ‘reliable’ research?” we wondered. “Which articles can be trusted and which should be read more critically?”

While journal- and article-level metrics are often seen as markers of research quality, their use in research evaluation has been widely critiqued. Citation counts, for example, vary dramatically from discipline to discipline, and show almost no relation to the appropriateness of an article’s statistical analysis or the quality of its reporting. The journal impact factor has similarly been deemed inappropriate for making article-level assessments of quality.

Instead, we evaluated research articles using qualitative factors:

- Participants: Was the research conducted on humans or animals? How large and representative was the sample?

- Study design: Was the study a single experiment or case study, or a randomized-controlled trial, meta-analysis, or review?

- Conflicts of interest: Who funded the research and what stake did they have in the outcomes?

- Relevance: Are the findings directly applicable to Canadians’ lives?

Finding research that matched these criteria was far more challenging, not to mention time-consuming, than simply choosing the top-cited articles for each of our four topics. But we hoped the resulting selection would offer audiences a more nuanced take on the issues at hand.

Making science “public” is no easy task

What did this exercise teach us? Even though everyone on our team has research experience, we all struggled to find high quality scientific articles about our chosen topics that were publicly available. Academic papers, we discovered, are inaccessible to the public in two ways: most are paywalled and, more importantly, many are written in scientific jargon that makes it challenging to assess the quality of the experiments and their results.

In other words, if we truly care about making our science “public,” we need to start thinking like the publics we are trying to reach. Where do they look for answers to scientific questions? What kind of language do they use? In which formats do they prefer to receive information?

Answering these questions means exploring alternative forms of science communication that truly engage publics, such as the visual techniques we will cover in our CSPC panel Creating SciComm. By communicating our research in more accessible, understandable ways, we can build a new relationship between science and society, one that we hope will lead to a better understanding of, and ultimately trust in, science.

More on the Author(s)

Alice Fleerackers

ScholCommLab | Simon Fraser University

Lab Manager | Doctoral Student

Julia Krolik

Art the Science | Pixels and Plans

Founder

Cat Lau

CHILD-BRIGHT Network

Knowledge Translation Coordinator

Dorina Simeonov

AGE-WELL NCE Inc.

Policy and Knowledge Mobilization Manager